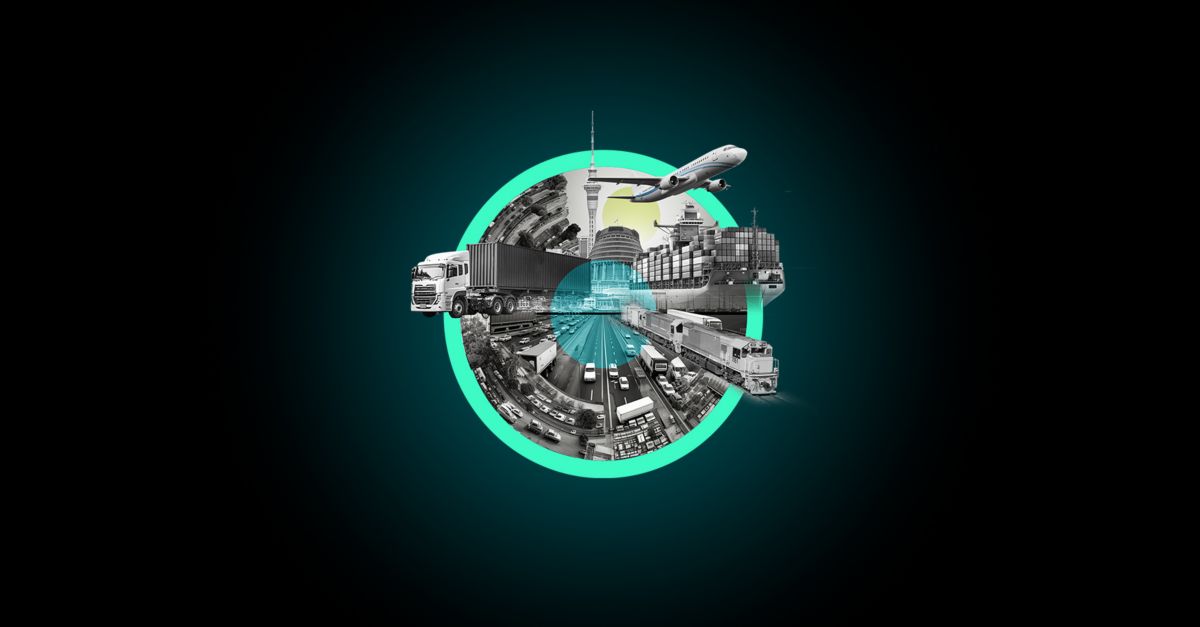

Business

OpenAI researcher resigns, claiming safety has taken “a backseat to shiny products”


Jan Leike, a key OpenAI researcher who resigned earlier this week following the departure of co-founder Ilya Sutskever, posted on X Friday morning that “safety culture and processes have taken a backseat to shiny products” at the company.

Leike’s statements came after Wired reported that OpenAI had disbanded altogether the team dedicated to addressing long-term AI risks, called the “Superalignment team.” Leike had been running the Superalignment team, which formed last July to “solve the core technical challenges” in implementing safety protocols as OpenAI developed AI that can reason like a human.

OpenAI was originally meant to provide its models openly to the public, hence the organization’s name, but the models have since become proprietary, with the company claiming that letting anyone access such powerful models could be destructive.

“We are long overdue in getting incredibly serious about the implications of AGI. We must prioritize preparing for them as best we can,” Leike said in follow-up posts about his resignation Friday morning. “Only then can we ensure AGI benefits all of humanity.”

The Verge reported earlier this week that John Schulman, another OpenAI co-founder who supported CEO Sam Altman during last year’s unsuccessful board coup, will assume Leike’s responsibilities. Sutskever, who played a key role in the notorious failed coup against Altman, announced his departure on Tuesday.

“Over the past years, safety culture and processes have taken a backseat to shiny products,” Leike posted.

Leike’s posts highlight an increasing tension within OpenAI. As researchers race to develop artificial general intelligence while managing consumer AI products like ChatGPT and DALL-E, employees like Leike are raising concerns about the potential dangers of creating super-intelligent AI models. Leike said his team was deprioritized and couldn’t get compute and other resources to perform “crucial” work.

“I joined because I thought OpenAI would be the best place in the world to do this research,” Leike wrote. “However, I have been disagreeing with OpenAI leadership about the company’s core priorities for quite some time, until we finally reached a breaking point.”
